Data Diets: Why Sampling Can Make or Break Your AI Models

In the world of AI, Machine Learning, and Deep Learning, we’re obsessed with Big Data. But here is the dirty little secret: training a model on all the data is rarely practical, cost-effective, or even beneficial.

Whether you are fine-tuning a massive LLM or training a computer vision model for edge devices, sampling is your best friend. It reduces computational overhead, speeds up iteration cycles, and, most importantly, helps mitigate bias and overfitting. But picking the wrong sampling method? That’s a fast track to a model that fails miserably in production.

Let’s break down the five core sampling methods, when to use them, and their pros and cons specifically for AI/ML/DL workflows.

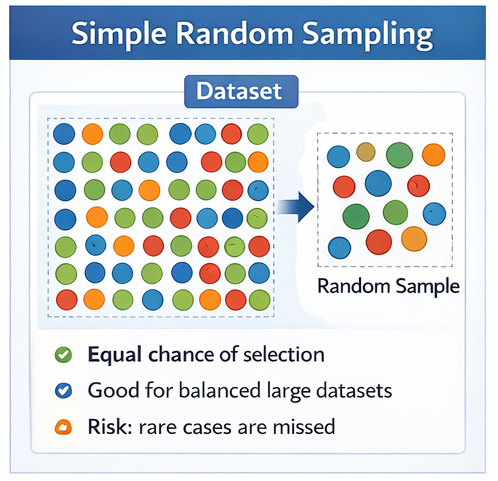

1. Simple Random Sampling

The Concept: Every individual data point in your population has an equal chance of being selected.

AI/DL Use Case: Creating standard train/validation/test splits for massive, highly homogenous datasets (like a massive corpus of standard English text for basic NLP tasks).

Pros: It is completely unbiased, mathematically simple to implement (just a simple train_test_split), and ensures a fair baseline.

Cons: If your dataset has rare edge cases, simple random sampling will likely miss them, leading to poor model generalization for those specific cases.

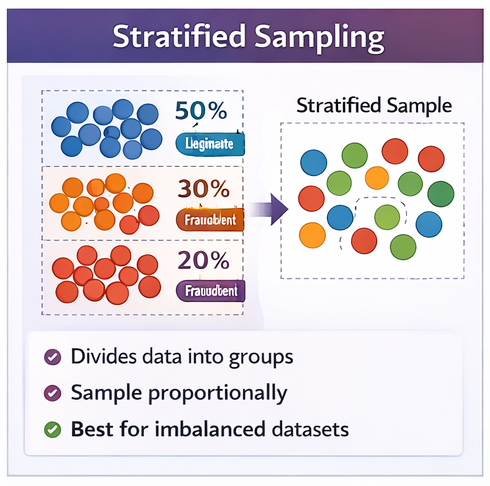

2. Stratified Sampling

The Concept: The population is divided into distinct subgroups (strata) based on characteristics, and random samples are pulled from each.

AI/DL Use Case: Handling highly imbalanced datasets. Think fraud detection (where 99% of transactions are legit and 1% are fraudulent) or medical image classification. You stratify to ensure your training batch contains a proportional amount of that crucial 1%.

Pros: Ensures minority classes are actually represented in your training data, drastically increasing the precision and recall of your model on rare events.

Cons: Requires you to have rich metadata and a priori knowledge of your population characteristics before you even start the pipeline.

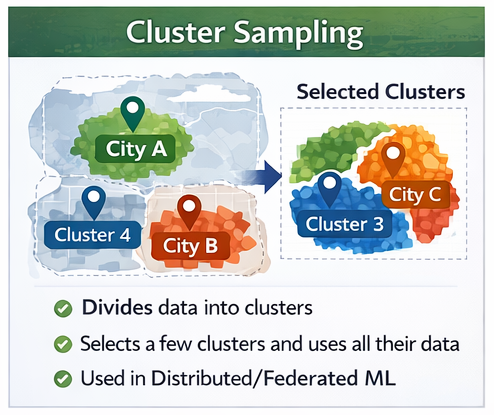

3. Cluster Sampling

The Concept: The population is divided into clusters (often geographic or structural), and whole clusters are randomly selected for the sample.

AI/DL Use Case: Distributed ML and Federated Learning. Imagine training a self-driving car model using sensor data. Instead of randomly sampling individual frames across the whole world, you randomly select specific cities (clusters) and use all the data from those cities.

Pros: Highly cost-effective and efficient for geographically dispersed data. It minimizes data transfer costs and respects the natural grouping of the data.

Cons: Lower precision. If you only sample driving data from sunny clusters (like Phoenix and LA), your model is going to crash the first time it sees snow in Chicago.

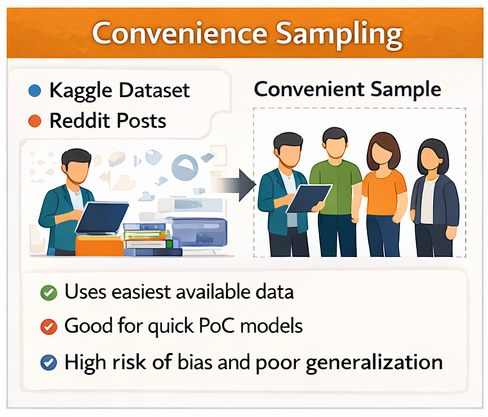

4. Convenience Sampling

The Concept: Selection is based purely on accessibility and availability.

AI/DL Use Case: Bootstrapping a proof-of-concept (PoC) model. This is the classic “I scraped Reddit and downloaded a Kaggle dataset” approach. It’s often used in early-stage NLP or computer vision projects where any data is better than no data.

Pros: Incredibly fast, cheap, and easy. It lets you get a baseline model up and running in hours instead of weeks.

Cons: Massive risk of bias. Models trained on convenience data almost never generalize well to the real world because the data is rarely representative of the true user base.

5. Snowball Sampling

The Concept: Recruiting samples through existing participants or data points.

AI/DL Use Case: Specialized AI for niche domains. For example, building a speech recognition model for a rare, undocumented dialect. You find one speaker, record them, and have them introduce you to other speakers in their network to build the corpus.

Pros: It is often the only way to access hidden, rare, or highly protected datasets that don’t exist in open-source repositories.

Cons: High risk of network bias. Your model might end up hyper-optimized for the specific quirks of a tight-knit community rather than the broader subgroup.

The Takeaway

Great AI isn’t just about the architecture; it’s about the data diet you feed it. Next time you’re setting up a data pipeline, don’t just blindly shuffle your dataset—choose a sampling strategy that aligns with your model’s real-world mission.